Grafana Assistant — Product Proposals


Two feature concepts that would make my day-to-day work smoother.

Context

I truly admire the work being done at Grafana, especially the thoughtful way the team is integrating LLMs and agents into the platform. Rather than treating AI as a bolted-on extension, Grafana Assistant feels designed around real workflows when I use it. That focus on practical, trustworthy AI (I can start an investigation and expect results) with genuine utility is what drew me to using it every day.

I wrote some feature proposals to describe what I hope Grafana Assistant’s future looks like.

I have watched the ObservabilityCON session “Grafana Assistant & Investigations” and read Maurice Rochau’s article on VMBlog about Observability in AI. I’ve tried to steer clear of features already on the suggested roadmap and instead present novel ideas that fall in line with Grafana Labs’ overarching goals for Assistant. :)

Proposals

Feature 1 — Grafana Assistant CLI

Extending Grafana Assistant from a UI feature into a more agent-accessible platform. (This was quietly released two days after I wrote it :p)


Outline

Grafana Assistant as an Intelligence API

Problem

Today, Grafana Assistant is accessed primarily inside the Grafana UI and is intended for human-driven investigations.

However, there is room for improvement in workflows where an agent, rather than a person, steers the debugging and the operational work that follows. An agent-accessible interface would improve external agents' ability to communicate with Grafana Assistant, and it would let developers who work strictly in the terminal, coding with agent harnesses (Claude Code, Codex, etc.), have their agents lead those investigations.

Developers who spend most of their time in the terminal would benefit strongly from a CLI that integrates directly into their workflows.

Proposal

Introduce a Grafana Assistant Intelligence API, with an official CLI as the reference client.

This would allow external tools to query Grafana Assistant directly and receive structured investigation results.

Example: Terminal investigation

```shell
grafana-assistant ask "why did API latency spike in last 30m?"
```

Returns structured output:

```json
{
  "summary": "...",
  "likely_causes": [...],
  "affected_services": [...],
  "related_dashboards": [...],
  "recommended_queries": [...]
}
```

Why this matters now

The developer tooling ecosystem is undergoing a major workflow shift. Grafana Assistant already supports turning investigations into code changes through GitHub's MCP server. Opening support for developer-first environments, where developers prompt their own agent to use Grafana Assistant from the terminal, would open a pathway to new users.
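To illustrate how an agent harness might drive this from the terminal, here is a minimal Python sketch that shells out to the `grafana-assistant ask` command from the terminal example and parses its JSON output into a typed result. The command's exact interface, the assumption that list items are strings, and the `Investigation`, `run_cli`, and `ask` names are all hypothetical, chosen for illustration rather than taken from any published interface.

```python
import json
import subprocess
from dataclasses import dataclass
from typing import Callable, List

# Field names copied from the example output; item types are assumed to be strings.
REQUIRED_FIELDS = (
    "summary",
    "likely_causes",
    "affected_services",
    "related_dashboards",
    "recommended_queries",
)


@dataclass
class Investigation:
    summary: str
    likely_causes: List[str]
    affected_services: List[str]
    related_dashboards: List[str]
    recommended_queries: List[str]


def run_cli(question: str) -> str:
    """Call the (assumed) `grafana-assistant ask` command and return its stdout."""
    result = subprocess.run(
        ["grafana-assistant", "ask", question],
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return result.stdout


def parse_investigation(raw: str) -> Investigation:
    """Check that every expected field is present, then build a typed result."""
    data = json.loads(raw)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"response is missing fields: {missing}")
    return Investigation(**{name: data[name] for name in REQUIRED_FIELDS})


def ask(question: str, runner: Callable[[str], str] = run_cli) -> Investigation:
    """Ask a question; `runner` can be swapped for a stub or an HTTP client."""
    return parse_investigation(runner(question))
```

Because `runner` is injectable, the parsing and validation can be exercised with a stub instead of the real CLI, and the same seam is where a harness could swap in a direct call to the proposed Intelligence API.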

Inspiration

The growth of CLI-native agent harnesses is not new (OpenCode gained 100k GitHub stars within a few months), and what clearly follows is tooling that supports these workflows:

Feature 2 — Prompt Linting

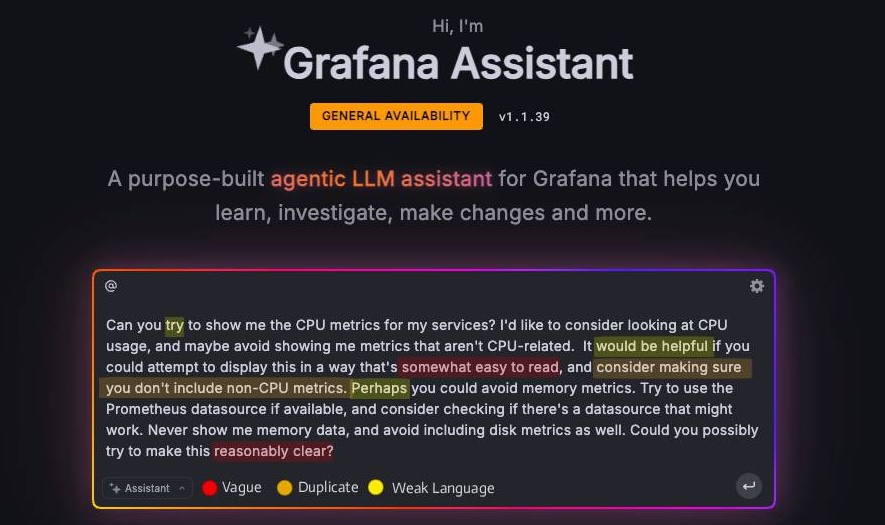

Help Grafana Assistant users get the most out of it by improving their prompts.

Concept UI mockup.


Problem

Linting is a standard component of modern developer workflows. As prompt-driven interactions become increasingly common, prompt linting is a natural next step to help users get the most from Grafana Assistant, whose effectiveness depends heavily on prompt quality.

In practice, many users do not refine their prompts, which are often:

- Vague

- Missing scope or @context

- Missing time ranges

- Missing environment context

Weaker prompts lead to:

- Slower investigations

- More work for the agent

- Inconsistent Assistant results

These cases reduce trust in Assistant-generated results and create frustration with AI-assisted observability, even when the root issue is prompt structure rather than model capability.

Proposal

Unlike traditional observability query languages (PromQL, LogQL), prompt-driven observability has no structured guidance on instruction quality. This feature would introduce a prompt linting workflow backed by the Language Server Protocol (LSP) to bridge the gap between bad and good prompts.
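Being LSP-backed would mean lint results reach the editor as standard LSP diagnostics, the same channel editors already use for underlines and hover messages. The minimal Python sketch below shows that output shape; the `Finding` type, the rule code, and the `source` label are hypothetical, while the field layout, exclusive end position, and severity codes follow the LSP `Diagnostic` definition.

```python
import json
from dataclasses import dataclass

# DiagnosticSeverity values as defined in the LSP specification.
ERROR, WARNING, INFORMATION, HINT = 1, 2, 3, 4


@dataclass
class Finding:
    """Hypothetical lint finding for a single-line prompt."""
    start: int  # character offset where the flagged span begins
    end: int    # character offset where the flagged span ends (exclusive)
    message: str
    code: str = "prompt-lint"
    severity: int = WARNING


def to_lsp_diagnostic(finding: Finding, line: int = 0) -> dict:
    """Shape a finding as an LSP Diagnostic (range, severity, code, source, message).

    LSP positions count UTF-16 code units by default; this sketch assumes
    ASCII prompts, where that matches Python string offsets.
    """
    return {
        "range": {
            "start": {"line": line, "character": finding.start},
            "end": {"line": line, "character": finding.end},
        },
        "severity": finding.severity,
        "code": finding.code,
        "source": "grafana-assistant-prompt-lint",  # hypothetical source label
        "message": finding.message,
    }


if __name__ == "__main__":
    prompt = "why is the registration gateway slow"
    finding = Finding(0, len(prompt), "Investigation prompt has no time range.",
                      code="missing-time-range")
    print(json.dumps(to_lsp_diagnostic(finding), indent=2))
```

Emitting plain LSP diagnostics keeps the linter editor-agnostic: any client that already speaks LSP could render the same warnings without Grafana-specific UI code.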

For a Grafana Assistant-specific solution, the linter would use a hybrid approach: run cheap static checks first, then fall back to LLM analysis only when inferring meaning is required.
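The hybrid ordering can be sketched in Python as follows. A pluggable `llm_classify` callable stands in for whatever model call Grafana Assistant would actually make, and it is only invoked when the cheap keyword heuristic cannot decide the intent. The character limit, vague-word list, keywords, and intent labels are illustrative placeholders, not tuned rules.

```python
import re
from typing import Callable, List, Optional

MAX_CHARS = 2000  # hypothetical threshold for an "oversized" prompt
VAGUE_TERMS = {"stuff", "something", "things", "weird", "broken"}  # illustrative list


def static_checks(prompt: str) -> List[str]:
    """Cheap, deterministic checks that never call a model."""
    warnings = []
    if not prompt.strip():
        warnings.append("Prompt is empty.")
    if len(prompt) > MAX_CHARS:
        warnings.append(f"Prompt exceeds {MAX_CHARS} characters.")
    words = set(re.findall(r"[a-z']+", prompt.lower()))
    vague = sorted(words & VAGUE_TERMS)
    if vague:
        warnings.append(f"Vague wording: {', '.join(vague)}.")
    return warnings


def keyword_intent(prompt: str) -> Optional[str]:
    """Return an intent when keywords make it obvious, else None (ambiguous)."""
    text = prompt.lower()
    if any(k in text for k in ("why", "slow", "spike", "error", "latency")):
        return "investigation"
    if "dashboard" in text and any(k in text for k in ("create", "build")):
        return "dashboard_authoring"
    return None


def lint(prompt: str, llm_classify: Callable[[str], str]) -> dict:
    """Run static checks first; call the LLM only when the intent is ambiguous."""
    warnings = static_checks(prompt)
    intent = keyword_intent(prompt)
    used_llm = False
    if intent is None:
        intent = llm_classify(prompt)  # expensive path, taken only when needed
        used_llm = True
    return {"intent": intent, "warnings": warnings, "used_llm": used_llm}
```

For a prompt like "why is the registration gateway slow", the keyword heuristic already resolves the intent, so no model call is made; only genuinely ambiguous prompts pay the LLM cost.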

Example: if the prompt is "why is the registration gateway slow", run intent classification and slot extraction, then apply rule checks:

```
if intent = investigation and time.range = null -> warn
```

Suggestions could include:

- Time window

- Environment

- Data source context

- Latency percentiles
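Continuing the registration gateway example, the rule check and the suggestions above might look like this in Python. Slot extraction here uses simple regular expressions as a stand-in for the proposal's slot extraction step (which could well be model-assisted), and the intent is passed in as if a classifier had already produced it. Every pattern, slot name, and message string is an illustrative assumption.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Illustrative slot patterns (assumptions); a real linter would need richer parsing.
TIME_RANGE = re.compile(
    r"\b(?:last|past)\s+\d+\s*(?:m|min|minutes?|h|hours?|d|days?)\b"
    r"|\b(?:since|today|yesterday)\b",
    re.IGNORECASE,
)
ENVIRONMENT = re.compile(r"\b(?:prod|production|staging|dev)\b", re.IGNORECASE)
DATA_SOURCE = re.compile(r"\b(?:prometheus|loki|tempo|mimir)\b|@\w+", re.IGNORECASE)
PERCENTILE = re.compile(r"\bp(?:50|90|95|99)\b", re.IGNORECASE)
LATENCY_WORDS = re.compile(r"\b(?:slow|latency|lag)\b", re.IGNORECASE)


@dataclass
class Slots:
    time_range: Optional[str]
    environment: Optional[str]
    data_source: Optional[str]
    percentile: Optional[str]


def first_match(pattern: re.Pattern, text: str) -> Optional[str]:
    match = pattern.search(text)
    return match.group(0) if match else None


def extract_slots(prompt: str) -> Slots:
    """Pull out the context slots that the rule checks care about."""
    return Slots(
        time_range=first_match(TIME_RANGE, prompt),
        environment=first_match(ENVIRONMENT, prompt),
        data_source=first_match(DATA_SOURCE, prompt),
        percentile=first_match(PERCENTILE, prompt),
    )


def check_investigation(intent: str, prompt: str) -> Optional[dict]:
    """Rule: if intent = investigation and no time range is found -> warn."""
    slots = extract_slots(prompt)
    if intent != "investigation" or slots.time_range is not None:
        return None
    suggestions = ["Add a time window (e.g. 'in the last 30m')."]
    if slots.environment is None:
        suggestions.append("Name the environment (e.g. prod or staging).")
    if slots.data_source is None:
        suggestions.append("Point at a data source or @context (e.g. Prometheus, Loki).")
    if slots.percentile is None and LATENCY_WORDS.search(prompt):
        suggestions.append("Specify a latency percentile (e.g. p95 or p99).")
    return {
        "severity": "warning",
        "message": "Investigation prompt has no time range.",
        "suggestions": suggestions,
    }
```

For "why is the registration gateway slow" this returns a warning with all four suggestions, since the prompt names no time window, environment, or data source, and mentions slowness without a percentile.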

Inspiration

This concept was inspired by Pierce Boggan, a great PM on VS Code, and his work on prompt linting support for prompt files: microsoft/vscode#294986. I read his PR a couple of days ago, and much of its thinking and design resonated with me.

That implementation combines static analysis (for things like oversized prompts and vague wording) with LLM-based analysis. A Grafana adaptation would follow the same pattern with observability-specific checks.

Just for fun.